Crawling & learning process

In the previous section, you connected all your company apps to Glean. Three background processes now take place, each essential to optimizing Glean's functionality:

- Crawling - Glean fetches data from your connected apps.

- Indexing - Glean creates a model of the data that was fetched and incorporates it into your organization's search index.

- Learning - Glean processes the data that was fetched using Machine Learning (ML) to create a search and ranking algorithm tailored to your organization's data and users.

Timelines for completion

The time required to complete all three processes will vary depending on the size of your organization and the volume of content that Glean needs to process. The combined crawling and indexing processes can take approximately:

- Two to three days for a typical small organization or a small volume of content.

- 10 to 14 days for a typical large organization or a large volume of content.

The machine learning (ML) process can take an additional two to 14 days, depending on:

- The GCP or AWS region that your Glean tenant was deployed to (and the tier of TPU/GPU hardware available in that region).

- The amount of content that needs to be processed as part of each ML workflow.

These times are estimates only.
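To see how the ranges combine, the following Python sketch adds the crawling-and-indexing estimate to the ML estimate. It assumes the two stages run one after the other (ML starts once crawling and indexing finish) and uses only the figures quoted above; `estimate_total_days` and the "small"/"large" labels are illustrative names, not part of Glean.

```python
# Estimated durations in days, taken from the ranges quoted above.
# "small" and "large" are the two reference points given; real
# deployments may fall between or outside them.
CRAWL_AND_INDEX_DAYS = {
    "small": (2, 3),
    "large": (10, 14),
}
ML_DAYS = (2, 14)  # additional time for the ML workflows


def estimate_total_days(org_size: str) -> tuple[int, int]:
    """Return a (min, max) estimate of total days until all processes finish.

    Assumes ML starts only after crawling and indexing complete,
    so the two ranges simply add.
    """
    crawl_min, crawl_max = CRAWL_AND_INDEX_DAYS[org_size]
    ml_min, ml_max = ML_DAYS
    return crawl_min + ml_min, crawl_max + ml_max


if __name__ == "__main__":
    for size in ("small", "large"):
        low, high = estimate_total_days(size)
        print(f"{size}: roughly {low} to {high} days")
```

For a small organization this works out to roughly 4 to 17 days in total, and for a large one roughly 12 to 28 days. Treat these as rough planning bounds, not commitments.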

Your Glean engineer will advise you once all crawling, indexing, and learning processes have been completed. The remainder of this document will cover these processes in more detail.

For now, you can proceed to the next step: Populate Content.

About crawling and indexing

When you start the first crawl for a data source, Glean begins the crawling and indexing processes. During this time, Glean will:

- Crawl the content (and associated permissions and activity metadata) for the selected data source.

- Create the Glean Knowledge Graph by indexing the crawled content, mapping it together, and creating a real-time model that can be referred to in response to a user's query.

Crawling is the process by which Glean fetches data from your organization's data sources in order to build the search index.

The Knowledge Graph is a real-time model of your organization's indexed information. It is a map that links all content, people, permissions, language, and activity within your organization. It is designed to provide users with the most personalized and relevant results for their queries in a matter of milliseconds.

Indexing is the process in which Glean makes content ready for display in search results by creating (or updating) your organization's Knowledge Graph: the mapping between all content, people, permissions, language, and activity in the company.

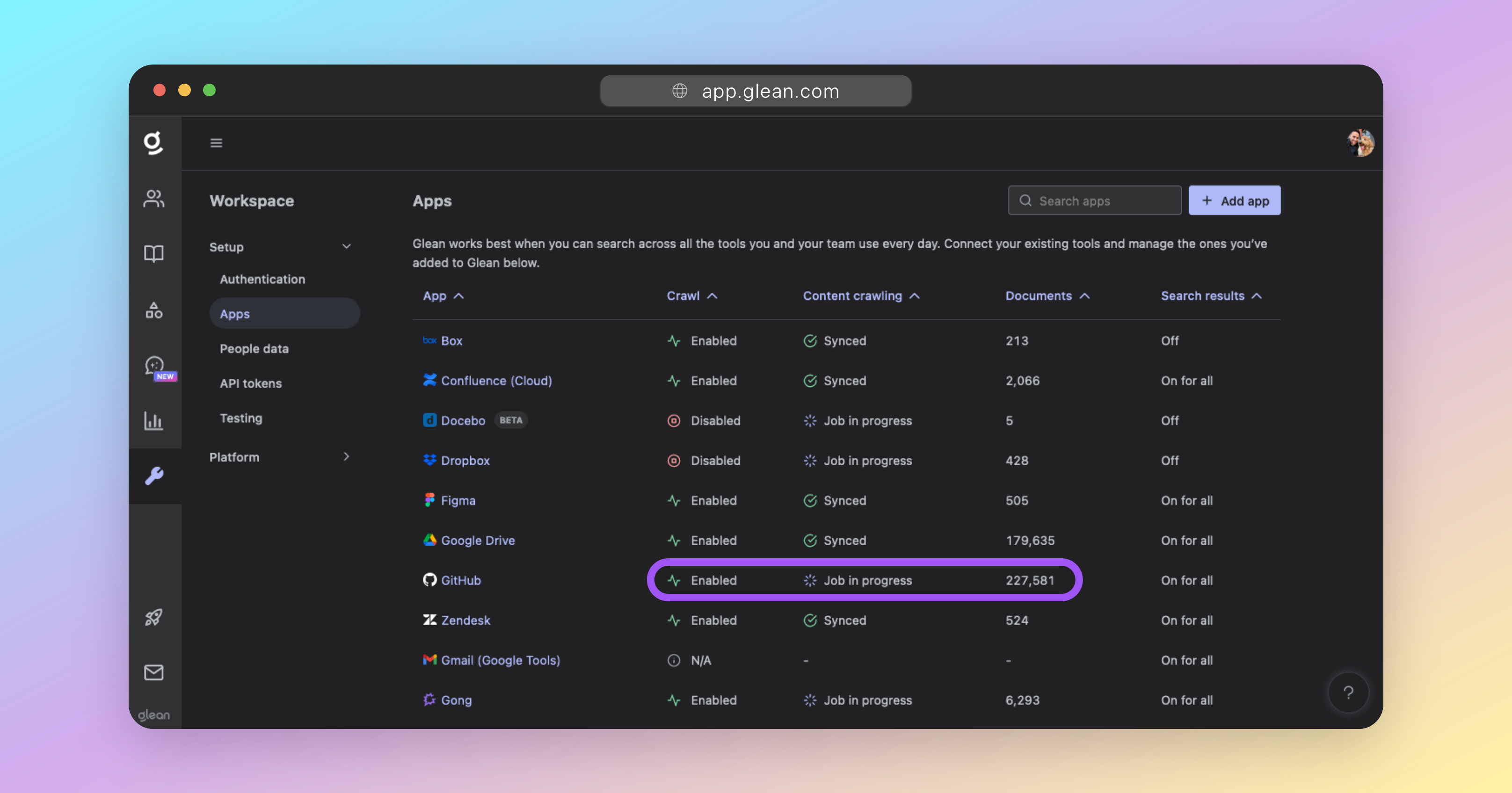

Check the crawling and indexing status

You can check the status of your in-progress crawls at any time by going to Admin Console → Platform → Data sources and reviewing the table of configured apps.

When a data source is undergoing its initial sync, it will appear under the Initial sync in progress section, which is split into two phases:

- Crawling (step one of two) - Glean is actively fetching content and metadata from the data source.

- Indexing (step two of two) - Glean is processing the crawled content and incorporating it into the Knowledge Graph.

Once both crawling and indexing are complete for a data source, it moves from Initial sync in progress to the All data sources section, where it appears alongside other fully synced sources.
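The grouping rule above can be summarized as a small mapping from sync phase to table section. This is a hypothetical Python model for illustration only: `SyncPhase`, `table_section`, and `next_phase` are made-up names, and Glean does not necessarily represent sync state this way.

```python
from enum import Enum


class SyncPhase(Enum):
    """Hypothetical phases of a data source's initial sync."""
    CRAWLING = 1  # step one of two: fetching content and metadata
    INDEXING = 2  # step two of two: adding content to the Knowledge Graph
    COMPLETE = 3  # both steps finished


def table_section(phase: SyncPhase) -> str:
    """Return the Data sources section a source is listed under."""
    if phase in (SyncPhase.CRAWLING, SyncPhase.INDEXING):
        return "Initial sync in progress"
    return "All data sources"


def next_phase(phase: SyncPhase) -> SyncPhase:
    """Advance one step; crawling always precedes indexing."""
    order = list(SyncPhase)  # members in definition order
    return order[min(order.index(phase) + 1, len(order) - 1)]


if __name__ == "__main__":
    phase = SyncPhase.CRAWLING
    while True:
        print(phase.name, "->", table_section(phase))
        if phase is SyncPhase.COMPLETE:
            break
        phase = next_phase(phase)
```

The key point the model captures is that a source stays under Initial sync in progress through both phases and only moves once indexing has also finished.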

For each data source, you'll see:

- Items synced - The total number of items (documents, messages, files, etc.) that have been crawled and indexed. If a document has been crawled and indexed, it's visible in Glean.

- Change rate (items/day) - The number of changes (edits, additions, deletions) synced in the past 24 hours, reflecting ongoing freshness after initial sync completes.
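As an illustration of how the change rate is defined, the sketch below counts change events that fall within the 24 hours before a given time. It assumes you have a list of change timestamps; `change_rate` is a hypothetical helper, not a Glean API, and the exact window boundaries (exclusive start, inclusive end) are an assumption.

```python
from datetime import datetime, timedelta, timezone


def change_rate(change_times: list[datetime], now: datetime) -> int:
    """Hypothetical helper: count changes synced in the 24 hours before `now`.

    Each entry in `change_times` is the time one edit, addition, or deletion
    was synced. Boundary handling (exclusive start, inclusive end) is an
    assumption made for this sketch.
    """
    window_start = now - timedelta(hours=24)
    return sum(1 for t in change_times if window_start < t <= now)


if __name__ == "__main__":
    now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
    changes = [
        now - timedelta(hours=1),   # inside the window
        now - timedelta(hours=23),  # inside the window
        now - timedelta(hours=25),  # older than 24 hours, not counted
    ]
    print(change_rate(changes, now))  # prints 2
```

Because the console refreshes its metrics hourly, the value it displays may lag the true trailing-24-hour count by up to about an hour.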

Status and metrics refresh on an hourly cadence. If you don't see immediate updates after making changes, check back in about an hour.

About machine learning

Once the crawling and indexing processes have been completed, Glean will initiate several Machine Learning (ML) workflows that will run on all indexed content.

The ML process is critically important and is responsible for:

- Optimizing search query understanding and spellcheck.

- Understanding synonyms, acronyms, and semantics used in documents and between employees within your organization.

- Enhancing relevance rankings for search results and people suggestions.

- Enabling query suggestions, predictive text, and autocomplete.

- Training your organization's unique language model, which is essential for Glean to operate.

Glean is not supported for use until the ML process has completed successfully, so do not give users access to Glean until all ML workflows have finished.

Check the machine learning status

The ML workflows run in the background, and you cannot currently check their status in the Glean UI. Your Glean engineer will advise you once they have completed.